Modern C++ programming largely abstracts away what is happening “under the hood”, i.e. what code the CPU is actually executing. This is nice for the programmer … if it works well. Unfortunately, in embedded systems, this abstraction can also introduce a stability problem that many programmers are unaware of. This post gives some background, explains the problem and presents a solution.

I believe this is a must-read for every programmer using C++ in embedded systems!

The problem I am talking about is that memory allocation routines are called both from the main program and from interrupt service routines. In many cases, this disastrous problem makes it into the final product, rendering the system unstable. Crashes and malfunctions occur randomly and rarely enough to be very hard to track down, but not rarely enough to ignore.

In fact, there are likely millions and millions of systems with this problem out there right now.

Background

In the early days, programs for embedded devices were written in assembly language. Given that this was tedious, error-prone and very much dependent on the target CPU, developers quickly switched to a higher-level language once C compilers for embedded systems became available.

Moving from Assembler to C

The first widespread choice for high-level programming was C, which is still used in many, if not most, applications. In C, the programmer does not have to think about the machine level, i.e. the individual instructions for the target CPU. The benefit is that the developer can focus on the actual algorithm or problem to be solved. Compared to assembly language, C source code is easier to read, faster to write, much more compact, and far easier to maintain.

But relinquishing control comes at a cost: the programmer naturally becomes more distanced from the machine and from what is happening in the target system. Sure, things are easier to write, but there is much less control over how the compiler translates the source code into the instructions that get executed.

Moving from C to C++

Moving from C to C++ takes this one step further. The level of abstraction is higher and the program source code becomes even more compact. At the same time, exactly what happens in the target system becomes even less clear. In many cases, the functions being called, such as constructors, destructors and overloaded operators, are no longer visible in the source code.
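To make such hidden calls visible, the sketch below replaces the global operator new (which standard C++ permits) with a version that counts its calls. The helper record_sample() is hypothetical and contains no explicit allocation, yet both of its statements typically reach operator new: growing a std::vector, and storing a string longer than the library's small-string buffer (64 characters is assumed to exceed that buffer, which holds for common standard library implementations).

```cpp
#include <cassert>
#include <cstddef>
#include <cstdlib>
#include <new>
#include <string>
#include <vector>

// Number of calls to the global operator new in this program.
static std::size_t g_new_calls = 0;

// Replacement global allocation functions (permitted by standard C++),
// used here only to make otherwise invisible heap calls observable.
void* operator new(std::size_t size) {
    ++g_new_calls;
    if (void* p = std::malloc(size != 0 ? size : 1)) {
        return p;
    }
    throw std::bad_alloc();
}

void operator delete(void* p) noexcept { std::free(p); }
void operator delete(void* p, std::size_t) noexcept { std::free(p); }

// Hypothetical helper: no "new", no malloc(), yet it allocates.
void record_sample(std::vector<int>& samples, std::string& label) {
    samples.push_back(42);   // growing the vector calls operator new
    label.assign(64, 'x');   // longer than the small-string buffer: operator new
}
```

Called from an ISR, innocent-looking code like this would enter the heap manager without a single visible call to it.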

Memory allocation – the heap

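In a C program, it is obvious to the application programmer whether, where and how the heap is used. The heap is the piece of memory from which the heap management code satisfies dynamic memory requests; that code basically consists of an allocation routine and a routine to free a memory block, such as malloc() and free(). In C++, this is no longer obvious: new-expressions, as well as standard library types such as std::string and std::vector, can allocate from the heap behind the scenes.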

Fragmentation

There are some problems with heaps, which is why many applications avoid using them. One of the biggest issues is fragmentation. This really only becomes an issue when memory is scarce, which is why it is rarely a problem when developing PC applications. In embedded systems, fragmentation can lead to a situation where the system runs out of memory, not because no memory is available, but because fragmentation leaves no free block of sufficient size. While this can be a serious problem, most programmers are aware of it, and it is not the focus of this article.

Reentrant code

Heap management routines are not reentrant, as they manage a global pool of memory. This means that calls to them need to be serialized, to make sure a call to one routine is not interrupted at a critical moment by another call to the same or another heap management routine.

For threads (tasks) in a multitasking environment (RTOS), this can easily be done using a semaphore or mutex.
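A minimal sketch of such serialization, assuming a host build in which std::mutex stands in for the RTOS mutex, and in which the hypothetical wrappers task_safe_malloc() and task_safe_free() are the only way tasks reach the heap. (A hosted C library's malloc() is already thread-safe; the wrappers only illustrate the pattern.)

```cpp
#include <cassert>
#include <cstddef>
#include <cstdlib>
#include <mutex>

// Stand-in for an RTOS mutex or semaphore guarding the heap (assumption).
static std::mutex g_heap_mutex;

// Hypothetical wrappers: tasks call these instead of malloc()/free(),
// so at most one task at a time is inside the heap manager.
void* task_safe_malloc(std::size_t size) {
    std::lock_guard<std::mutex> guard(g_heap_mutex);  // other tasks block here
    return std::malloc(size);
}

void task_safe_free(void* p) {
    std::lock_guard<std::mutex> guard(g_heap_mutex);
    std::free(p);
}
```

This serializes tasks against each other only; it does nothing to keep an interrupt out of the heap manager.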

Interrupts

When programming desktop C++ applications, this is not a problem, as the application program does not deal with interrupts. These are handled by the underlying operating system. (Note: when using signal handlers, similar rules apply as for interrupts.)

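The embedded system developer has no such luxury. Interrupt service routines (ISRs) are part of the application program. An ISR also cannot simply wait for a mutex or semaphore held by the code it has interrupted, because that code cannot continue until the ISR returns.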

This is where things become tricky and go wrong in many cases.

The Problem

An ISR interrupts the normal program execution. If it interrupts a heap operation and then performs a heap operation itself, it can corrupt the heap's internal data structures. For example, the same block of memory may be assigned twice: once to the ISR requesting it and once to the interrupted main program. Conflicts like this cause unpredictable and potentially catastrophic behavior, including system instability and crashes.
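The double assignment can be reproduced deterministically with a toy allocator. The sketch below assumes a simple free list of fixed-size blocks (real heap managers are more elaborate) and simulates the interrupt with a callback invoked between the two steps of an unprotected allocation: reading the head of the free list, and unlinking it. All names are hypothetical.

```cpp
#include <cassert>
#include <cstddef>

// Toy heap: a singly linked free list of fixed-size blocks (hypothetical).
struct Block {
    Block* next;                 // link to the next free block
    unsigned char payload[28];   // user data once allocated
};

constexpr std::size_t kPoolSize = 4;
static Block g_pool[kPoolSize];
static Block* g_free_list = nullptr;

// Chain all blocks into the free list: 0 -> 1 -> 2 -> 3.
void pool_init() {
    for (std::size_t i = 0; i + 1 < kPoolSize; ++i) {
        g_pool[i].next = &g_pool[i + 1];
    }
    g_pool[kPoolSize - 1].next = nullptr;
    g_free_list = &g_pool[0];
}

// Unprotected allocation, split into its two steps. The optional callback
// simulates an interrupt arriving between them.
Block* pool_alloc(void (*interrupt_here)() = nullptr) {
    Block* head = g_free_list;        // step 1: read the list head
    if (head == nullptr) {
        return nullptr;               // pool exhausted
    }
    if (interrupt_here != nullptr) {
        interrupt_here();             // the "ISR" runs here
    }
    g_free_list = head->next;         // step 2: unlink the block
    return head;
}

static Block* g_isr_block = nullptr;

// Simulated ISR that allocates from the same heap.
void fake_isr() { g_isr_block = pool_alloc(); }
```

After pool_init(), a call to pool_alloc(fake_isr) hands &g_pool[0] to both the main program and the simulated ISR, and each will now write its own data into the same memory.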

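This issue rarely shows up in testing, because the interrupt has to arrive during the short window in which the heap's internal data structures are inconsistent, and the ISR must then use the heap itself. The bug then makes it into the final product, making the system unstable, with random crashes whose cause is virtually impossible to reproduce for analysis.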

Even once it becomes obvious that there is a problem, finding the cause is like finding a tiny needle in an enormous haystack.

The solution

As in many cases, the solution is quite obvious, once the problem is fully understood.

The hard way

The hard way is to make sure your application does not call heap functions from within an ISR. This can be done by using only C in ISRs and at the same time making sure that there are no calls to malloc() or free().

Unfortunately, this is not so easy, especially as the ISR will most likely call other functions, which now also need to be guaranteed to be “heap-free”.

Another problem is that even if your code manages to do so, another team member might later add C++ code to an ISR and reintroduce the problem.

So this is easier said than done.

But one recommendation if you decide to go that route:

You should make sure in a debug build that your assumption, namely that memory management routines are never called from an interrupt context (ISR), is actually correct. Do this by adding a piece of code to the heap's “Lock” routine that checks the interrupt status of the CPU.

This does not avoid the critical problem, but it allows it to be found during development (“on the desk”) instead of making it into the final product.
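A debug-build check could look like the sketch below. Reading the CPU's interrupt status is target-specific, so the hypothetical in_interrupt_context() is backed here by a simulated flag; on an Arm Cortex-M, for example, the IPSR register is non-zero while an exception handler runs and can be read with CMSIS's __get_IPSR(). heap_lock(), heap_unlock() and the violation counter are likewise assumptions, not part of any particular runtime library.

```cpp
#include <cassert>
#include <mutex>

// Simulated CPU interrupt status for a host build (hypothetical). On a
// Cortex-M target, in_interrupt_context() could return __get_IPSR() != 0.
static bool g_simulated_handler_mode = false;

static bool in_interrupt_context() { return g_simulated_handler_mode; }

static unsigned g_heap_isr_violations = 0;
static std::mutex g_heap_mutex;  // task-level lock (stand-in for an RTOS mutex)

// Hypothetical lock hook called by the heap manager before touching its data.
void heap_lock() {
#ifndef NDEBUG
    // Debug builds only: the design assumes the heap is never used from an ISR.
    if (in_interrupt_context()) {
        ++g_heap_isr_violations;  // on target: e.g. stop here in the debugger
    }
#endif
    g_heap_mutex.lock();
}

void heap_unlock() { g_heap_mutex.unlock(); }
```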

The easy way

The easy way to make sure that memory allocation functions are interrupt-safe is to disable interrupts during critical parts of the heap operations.
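A minimal sketch of this approach, with host-side stand-ins for the interrupt-control instructions (the names irq_save_and_disable() and irq_restore() are assumptions; on a Cortex-M they could map to CMSIS's __get_PRIMASK(), __disable_irq() and __set_PRIMASK()). The lock saves and restores the previous interrupt state instead of unconditionally re-enabling interrupts, so it also works when nested or when called with interrupts already disabled. Wrapping malloc() is only for illustration; in practice the runtime library's heap code would take such a lock internally.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <cstdlib>

// Host-side model of the CPU's global interrupt-enable flag (hypothetical).
static bool g_irq_enabled = true;

// Returns the previous state, then disables interrupts.
static std::uint32_t irq_save_and_disable() {
    std::uint32_t was_enabled = g_irq_enabled ? 1u : 0u;
    g_irq_enabled = false;
    return was_enabled;
}

// Restores exactly the state that was saved.
static void irq_restore(std::uint32_t state) { g_irq_enabled = (state != 0); }

// Scoped lock: interrupts stay disabled while an instance is alive.
class InterruptLock {
public:
    InterruptLock() : saved_(irq_save_and_disable()) {}
    ~InterruptLock() { irq_restore(saved_); }
    InterruptLock(const InterruptLock&) = delete;
    InterruptLock& operator=(const InterruptLock&) = delete;

private:
    std::uint32_t saved_;
};

// Hypothetical interrupt-safe wrappers around the heap functions.
void* isr_safe_malloc(std::size_t size) {
    InterruptLock lock;  // no ISR can enter the heap manager here
    return std::malloc(size);
}

void isr_safe_free(void* p) {
    InterruptLock lock;
    std::free(p);
}
```

The trade-off is added interrupt latency for the duration of each locked heap operation, so the locked sections should be kept as brief as possible.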

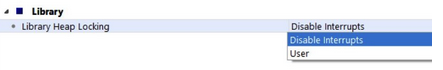

In Embedded Studio, this is the default behavior.

In other words, this problem does not exist when using Embedded Studio.

It simply works!

P.S.:

Embedded Studio also offers another locking option, called “User”.

Here it is the user’s responsibility to implement heap locking, for example to tune the system for multi-core CPUs or highly time-critical applications.